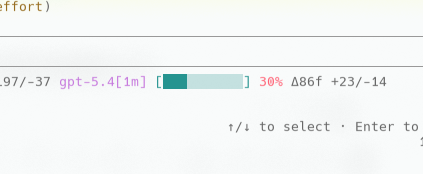

由于5.4是支持的,所以我用GPT5.5的时候都会在claude code默认使用[1m]

每次看

![]()

这个context window大小差不多到了27%再请求就会报错

stream error: stream disconnected before completion: stream closed before response.completed

看看CPA上的配额还有,不知道为啥,困扰了我好几天

后面发现原来GPT5.5现在codex上最多支持~272K

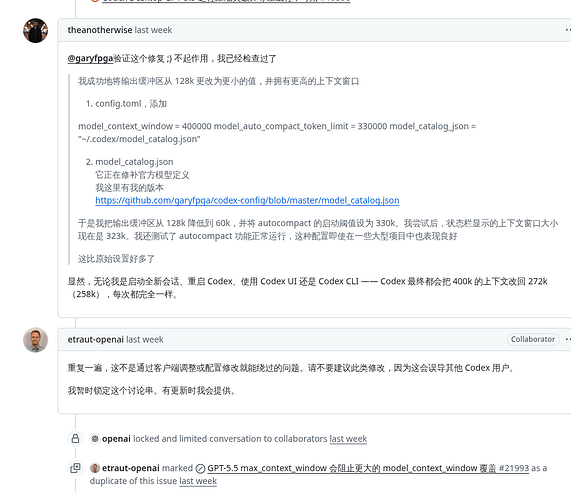

github.com/openai/codex Support 1M token context for GPT-5.5 in Codex 已打开 11:26PM - 24 Apr 26 UTC### What variant of Codex are you using? latest ### What feature would you lik…e to see? I want to give feedback on the current GPT-5.5 context limit in Codex. From the official announcement, I understand that GPT-5.5 in Codex is documented as having a 400K context window, while the API version supports 1M context. So this does not look like an undocumented bug. My concern is with the product decision itself. With GPT-5.4, Codex users could configure a much larger context window through `model_context_window`. For example: ```toml model = "gpt-5.4" model_context_window = 1000000 model_auto_compact_token_limit = 512000 ``` That made GPT-5.4 very useful for large repositories and long-running coding sessions. But with GPT-5.5, even though the model itself is supposed to be better at long-context reasoning and large coding tasks, Codex subscription users are now capped at 400K context. In practice, that makes GPT-5.5 feel like an upgrade in model quality but a downgrade in long-context usability inside Codex. I understand that 1M context is expensive. I also understand why 400K might be a reasonable default for most users. But I do not think it should be the hard limit for everyone. For advanced Codex users, especially those working on large codebases, the choice between compaction and full context matters a lot. Codex compaction is good, but it is still a tradeoff. During complex debugging, architecture analysis, or multi-file refactors, I would often rather spend more quota and preserve more raw context than compact earlier. A better approach might be: - Keep 400K as the default for GPT-5.5 in Codex. - Allow users to opt into larger windows, such as 512K, 768K, or 1M. - Charge a higher usage multiplier when larger context is enabled, similar to how Fast mode already uses a higher cost multiplier. The important point is user control. If a user prefers lower cost and faster compaction, 400K is fine. But if a user is willing to pay more quota for full-context runs, Codex should ideally allow that. Right now, GPT-5.5 is advertised as stronger for long-context coding work, but Codex subscription users have less access to that long-context capability than they had with GPT-5.4. That feels like a regression in one of the areas where GPT-5.5 should matter most. Please consider allowing advanced users to opt into 1M context for GPT-5.5 in Codex, even if it comes with a higher consumption rate. ### Additional information _No response_

不想使用compact, 所以又回去用GPT5.4了

gpt5.5 API是支持1m的, codex现在不肯上,超过272K计费是2x,估计算力紧张吧

1 个帖子 - 1 位参与者